Synopsis

Artificial intelligence and machine learning are widely considered to be among the most important general-purpose technologies of our era, comparable to electricity and the combustion engine; their applications now extend into almost every industry and research domain. Deep learning in particular has led to many recent breakthroughs in domains such as computer vision, natural language processing, and speech recognition. The goal of this course is to introduce the key concepts of deep learning and to illustrate them with applications in communications, such as an entirely neural network-based communications system that does not use any traditional algorithm. Deep learning for communications is still a young field that offers many attractive interdisciplinary research questions at the interface between machine learning, communications engineering, and information theory. The course is therefore a good opportunity to learn about cutting-edge research in communications and deep learning. A strong focus is placed on practical implementation with state-of-the-art deep learning libraries, and each lecture is accompanied by a Jupyter notebook with code examples.

Contents and Educational Objectives

1. Introduction (JH)

   - Hype around artificial intelligence and deep learning
   - Some historical remarks
   - Role of machine learning for future communications systems
   - Course environment: Git, Docker, Jupyter

2. A Primer on Deep Learning (JH)

   - Neural networks
   - Universal approximation theorem & approximation and estimation bounds
   - Backpropagation
   - Stochastic gradient descent
   - Gradient descent optimization algorithms
   - Capacity, overfitting, and underfitting
   - Regularization

3. Introduction to Python and TensorFlow (SD&SC)

4. Deep Learning-based User Localization (SD)

5. Convolutional Neural Networks: Modulation Classification (JH)

6. Deep Unfolding: Neural Belief Propagation (SC)

7. Recurrent Neural Networks: Decoding of Convolutional Codes (StB)

8. Residual Nets: Deep MIMO Detection (JH)

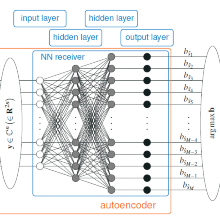

9. Autoencoders: Learning to Communicate (SC)

10. Using Neural Networks in Software-defined Radio (SD)

11. Generative Adversarial Networks: Channel Modeling (SD)

12. Open Ends, Summary and Outlook (JH)

Current Research

Bit-wise Autoencoder Communication System Training

This video shows the training process of a bit-wise autoencoder communications system. The system tries to find optimal signals for transmitting k = 4 bits within n = 2 real-valued channel uses at the training SNR of 3.85 dB. The left side shows the constellation currently in use, and the right side shows the mutual information obtained at the receiver. The heat maps at the bottom plot the current decision regions of the learned receiver, where pink regions indicate a strong decision towards bit 0 and blue regions a strong decision towards bit 1. The system eventually converges to a constellation that differs from classical modulation schemes, such as the comparable 16-QAM constellation, and is thereby able to transmit more information at this operating point. While such gains are well known in communications research as so-called shaping gains, the potential game changer of a neural network-based autoencoder system is that it can optimize its signals for any kind of channel, including unknown impairments, using data-driven training strategies.

For more information, see the paper "Trainable Communication Systems: Concepts and Prototype" by S. Cammerer, F. A. Aoudia, S. Dörner, M. Stark, J. Hoydis and S. ten Brink at ieeexplore.ieee.org, on which these results are based.
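
To make the quantities in the description above more concrete, the following is a minimal NumPy sketch of how the bit-wise mutual information of a fixed constellation could be estimated by Monte Carlo simulation. It is not the training code from the paper. It only evaluates the Gray-labeled 16-QAM baseline (k = 4 bits, n = 2 real channel uses) over an AWGN channel. Several things are assumptions made for illustration: the function names (`gray_16qam`, `normalize`, `bitwise_mutual_information`), the Gray labeling, the normalization to unit average energy per real channel use, and the SNR convention (signal energy over noise variance per real dimension). The source does not state which SNR definition the video uses, so the numbers need not match it.

```python
import numpy as np


def gray_16qam():
    """Return 16 points in R^2 (n = 2) with assumed 4-bit Gray labels (k = 4)."""
    levels = np.array([-3.0, -1.0, 1.0, 3.0])
    gray2 = np.array([[0, 0], [0, 1], [1, 1], [1, 0]])  # Gray labels per axis
    points, labels = [], []
    for i in range(4):
        for j in range(4):
            points.append([levels[i], levels[j]])
            labels.append(np.concatenate([gray2[i], gray2[j]]))
    return np.array(points), np.array(labels)


def normalize(points):
    """Scale to unit average energy per real channel use (an assumption)."""
    n = points.shape[1]
    energy = np.mean(np.sum(points ** 2, axis=1))
    return points * np.sqrt(n / energy)


def bitwise_mutual_information(points, labels, snr_db, num_samples=50_000, seed=0):
    """Monte Carlo estimate of the sum of the k per-bit mutual informations."""
    rng = np.random.default_rng(seed)
    # Assumed convention: SNR = 1 / sigma^2 per real dimension.
    sigma2 = 10.0 ** (-snr_db / 10.0)
    idx = rng.integers(0, len(points), size=num_samples)
    x = points[idx]
    y = x + rng.normal(scale=np.sqrt(sigma2), size=x.shape)  # AWGN channel

    # Log-likelihood of y for every constellation point, up to a constant.
    d2 = np.sum((y[:, None, :] - points[None, :, :]) ** 2, axis=2)
    logp = -d2 / (2.0 * sigma2)

    bmi = 0.0
    for i in range(labels.shape[1]):
        is_zero = labels[:, i] == 0
        # LLR = log P(b_i = 0 | y) - log P(b_i = 1 | y); its sign corresponds
        # to the decision regions shown in the heat maps.
        llr = (np.logaddexp.reduce(logp[:, is_zero], axis=1)
               - np.logaddexp.reduce(logp[:, ~is_zero], axis=1))
        sign = 1 - 2 * labels[idx, i]  # +1 if bit 0 was sent, -1 if bit 1
        # 1 - E[log2(1 + exp(-sign * LLR))] is the mutual information of bit i.
        bmi += 1.0 - np.mean(np.logaddexp(0.0, -sign * llr)) / np.log(2.0)
    return bmi


points, labels = gray_16qam()
points = normalize(points)
bmi_low = bitwise_mutual_information(points, labels, snr_db=3.85)
bmi_high = bitwise_mutual_information(points, labels, snr_db=20.0)
print(f"16-QAM bit-wise MI at 3.85 dB: {bmi_low:.3f} bit")
print(f"16-QAM bit-wise MI at 20 dB:   {bmi_high:.3f} bit")
```

In a trainable system, `points` would be treated as free parameters (together with a neural receiver that replaces the exact LLR computation) and adjusted by gradient descent. Under the assumptions above, minimizing the binary cross-entropy between transmitted bits and receiver outputs is one common way to maximize a quantity of this kind.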

Course Information

3 ECTS Credits (beginning with SS2020)

Lectures

| Lecturer | Dr.-Ing. Jakob Hoydis, Sebastian Cammerer, Sebastian Dörner, and Prof. Dr.-Ing. Stephan ten Brink |
| Time Slot | Thursday, 14:00-15:30 |
| Lecture Hall | 2.348 (ETI2) |
| Weekly Credit Hours | 2 |

Exercises

| Lecturer | Sebastian Cammerer, Sebastian Dörner, and Tim Uhlemann |
| Time Slot | TBD |
| Lecture Hall | 2.348 (ETI2) |
| Weekly Credit Hours | TBD (will be interleaved with the lecture) |

Dr.-Ing. Jakob Hoydis

Prof. Dr.-Ing. Stephan ten Brink, Director